How can trust be built among the public regarding the effectiveness and reliability of AI in Healthcare?

Explore the pivotal role of trust in healthcare AI. Discover strategies to foster confidence and understand the transformative impact of AI in healthcare.

There is no doubt that the healthcare industry is transforming thanks to artificial intelligence (AI). An article by Rose Velazquez, reviewed by Artem Oppermann, explores 39 examples of how AI is improving the future of medicine. However, I believe the degree of confidence that clinicians, patients, and the general public have in AI technology will determine its uptake and efficacy in the healthcare industry.

AI has the potential to significantly improve healthcare quality and lower costs, helping both developed and less developed countries. However, patients' comprehension and acceptance of AI are prerequisites for fulfilling this promise. To overcome the inherent challenges of integrating AI in healthcare, clinicians and patients must understand how AI functions before they can put their trust in it.

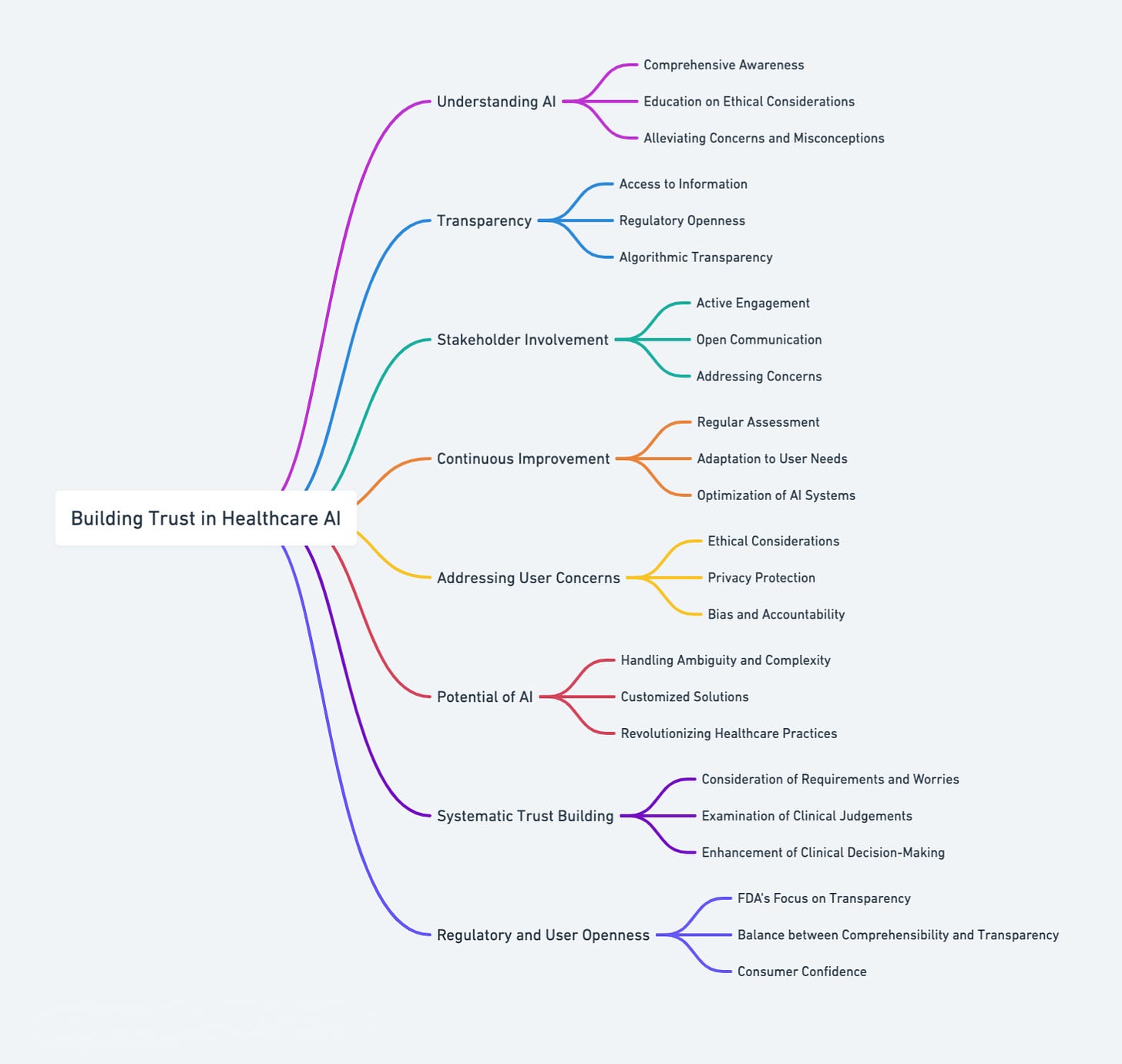

Building confidence in healthcare AI is a systematic process that requires close attention to the needs and concerns of medical professionals as well as patients. A thorough examination of how AI affects clinical judgements is necessary to identify and implement methods that can increase confidence in machine learning models. This trust is essential for the smooth integration of AI into medical procedures, allowing it to support and enhance clinical decision-making efficiently.

Building confidence in AI for healthcare also requires enabling and involving stakeholders. In order to address stakeholder concerns and consider their insights, stakeholder engagement is crucial during the development and deployment phases of AI systems. By ensuring that AI is in line with user values and demands, this interaction not only helps with the acceptance of AI but also improves its efficacy and dependability.

Building trust in AI/ML-based products used in healthcare requires transparency. To regulate this technology, regulatory organisations like the US Food and Drug Administration (FDA) are concentrating on transparency towards users. But it's unclear whether transparency alone can increase consumer confidence, or whether algorithm explanations would simply be disregarded. To ensure that AI is clear and easily understood by its users, it is imperative to strike the correct balance between comprehensibility and transparency.

Improving trust in AI is crucial to enhancing healthcare decision-making. Since doctors are, in practice, the primary users of AI systems at the moment, it is essential to consider the factors influencing their trust in the technology when developing any AI system intended for use in a clinical setting. Enhancing the overall effectiveness of AI in clinical contexts requires addressing these trust-related difficulties so that clinicians and AI can work together as effectively as possible.

AI's ability to handle ambiguity and complexity in data, producing practical, if not perfect, clinical conclusions or suggestions, is clear evidence of its potential to revolutionise healthcare practices. It can do so by providing actionable solutions tailored to each patient's unique needs and conditions. Making the most of AI's advantages while accepting its flaws can help clinicians make better-informed, more efficient decisions, improving healthcare.

Increasing healthcare trust in AI is a continual process that calls for the coordinated efforts of users, developers, regulators, and healthcare professionals. It is not a one-time event. It's a journey that requires ongoing assessment and improvement of AI systems to fulfil users' changing requirements and expectations while guaranteeing their efficacy, safety, and dependability. We can fully utilise healthcare AI and create a more informed and healthy world by promoting a culture of trust and understanding.

The foundation for establishing trust in AI in healthcare is understanding it. Patients and healthcare professionals must be thoroughly aware of AI's functions, possibilities, and constraints. To allay concerns and misconceptions and promote confidence and trust in AI technology, clinicians and patients must be educated about AI's ethical considerations, applications, and functionalities.

Transparency gives people easy access to information about how artificial intelligence functions, how decisions are made, and how data is handled. Regulatory openness protects users' rights and interests by ensuring AI technology abides by ethical norms and existing laws. Algorithmic transparency enhances the accountability and dependability of AI by enabling people to comprehend the decision-making process.

Involving stakeholders is crucial to fostering confidence in healthcare AI. It entails actively involving and enabling all stakeholders, including physicians, patients, developers, and regulators, throughout the development and implementation stages of AI systems. By encouraging candid communication, constructive criticism, and teamwork, this involvement helps ensure that AI solutions meet users' requirements, expectations, and values. It also makes it possible to recognise and resolve problems, raising the acceptance and efficacy of AI in healthcare.

Constant AI system improvement is essential to preserving and improving public confidence in AI in healthcare. It entails continuously assessing and improving AI technologies to guarantee their relevance, accuracy, and dependability. Thanks to continuous improvement, AI systems can be optimised and adjusted in response to changing user requirements, new technology developments, and emerging issues. It ensures that AI technologies continue to be useful, safe, and effective in the constantly evolving field of healthcare.

Considering users' concerns is essential to establishing healthcare AI's credibility. It entails identifying and addressing problems with accountability, ethics, privacy, and bias. Addressing ethical issues helps ensure that AI technologies are created and applied in a way that is equitable and just and that respects the rights and dignity of users. Addressing privacy concerns protects consumers' private information against misuse and unauthorised access. Addressing accountability and bias makes AI systems fairer and more accountable, and therefore more credible and trustworthy.

Developing trust in healthcare AI is a complex undertaking that includes improving comprehension, openness, participation, and governance. By attending to the demands and concerns of all parties involved and concentrating on the ongoing enhancement of AI systems, this work can support the best possible integration and application of AI in healthcare and help fulfil its potential to revolutionise medical procedures and improve patient outcomes.

Sources: NCBI, Healthcare IT News, PubMed, Harvard Business Review, Harvard Business Review, LinkedIn, Petrie-Flom Center Blog.

I so often see articles on Trust in digital systems, and they all fall into the same trap of focussing on one leg of the three-legged stool that fosters trust: Transparency. Even then, they deftly avoid the key patient right - to understand 'limitation of purpose' when providing their data into this AI machine. Your article, like so many others, ignores the other 2 really hard elements:

1. Control - clarity as to how control of information is shared between recipient and provider, where is the balance of empowerment in this action?

2. Accountability - where is the empowerment for me to hold someone (the AI?) accountable when/if things don't go right?

Without these 2 legs built as strongly as full transparency, trust will never be achieved in a sustainable way - transparency will get you to early adopter engagement, but no further.